A/B tests

An A/B test is a powerful marketing tool. It lets you evaluate how effective your message is and how leads react to a different version of it. For example, you might want to find out which greeting works better in an email: "Hello" or "Hi".

Any message can have an A/B test. You can check how changes in a message (and even in JS) affect the conversion rate.

Moreover, you can compare different types of communication: a chat message with a pop-up, a big pop-up with a small one, a chat message with JavaScript, and so on. Check which format works best for your message.

💡 Setting up an A/B test

Any triggered message can have an A/B test. You can set one up for a new message or for a triggered message that has already been created and launched. Let's see how to create an A/B test in a new message.

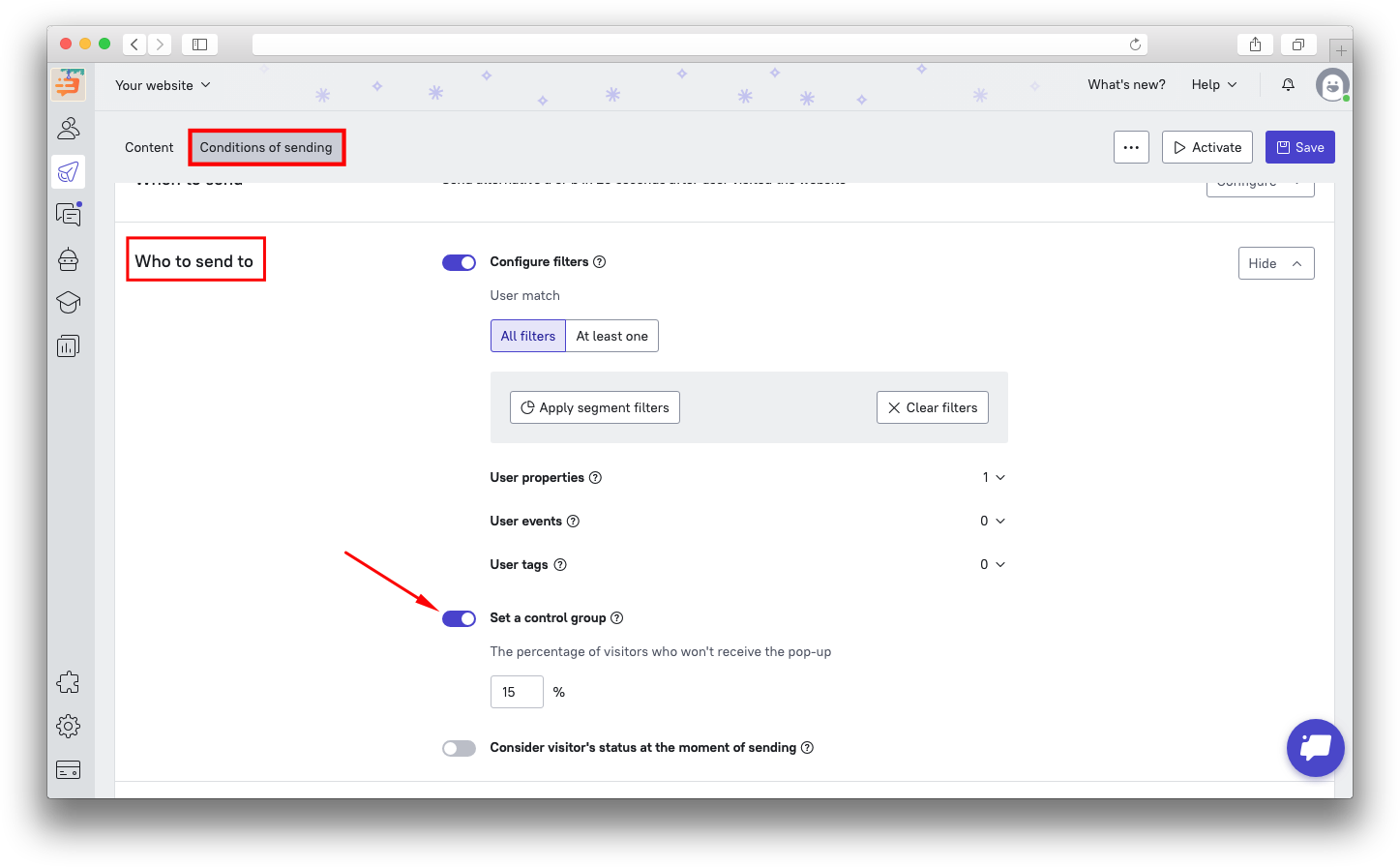

Create a new message, then choose the trigger, audience, and sending conditions. In the sending conditions you can specify the percentage of leads in the control group (these leads won't receive the message at all).

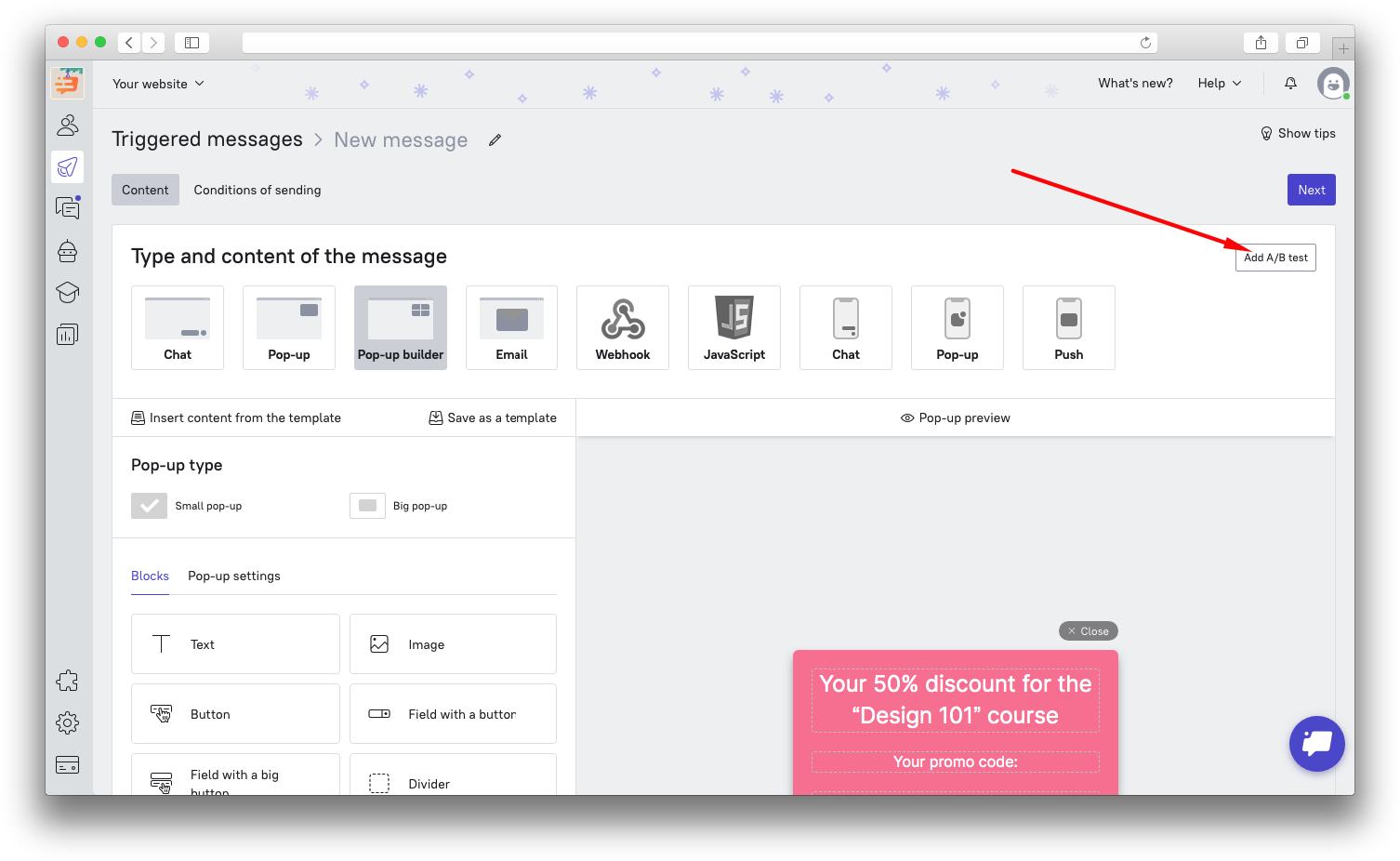

Click "Add A/B test" while setting up the content of the message. You'll now see two versions of the same message: Alternative A and Alternative B.

You can now set up the content for both alternatives. When both versions are ready, move on to "Conditions of sending".

💡 Control group

The control group helps you understand how leads behave without getting this message. Select the percentage of leads who will not see the message.

You can set the control group in "Conditions of sending" - "Who to send to". The default is 10%, and you can set your own value.

❗ Important: You can set the control group percentage even if you do not configure an A/B test. In this case you will see how your leads behave without getting this triggered message at all.

💡 Logic of A/B test and control group

Example: you create two versions of a message (run an A/B test) that are sent to all leads from London 10 minutes after they sign up.

Leads are selected from all those who signed up (trigger event) 10 minutes ago (timeout) and are from London (audience). If you set a control group, the selected percentage of these leads won't see the message at all. The A/B test doesn't affect the control group. If there is no A/B test, the rest of the audience receives the message. If an A/B test is configured, the rest of the audience is randomly divided 50/50: the first group sees version A and the second group sees version B.

❗ Important: leads are assigned to versions randomly, so the order in which they receive the message may differ from A-B-A-B-A-... The larger the audience, the closer the actual split is likely to be to 50:50.

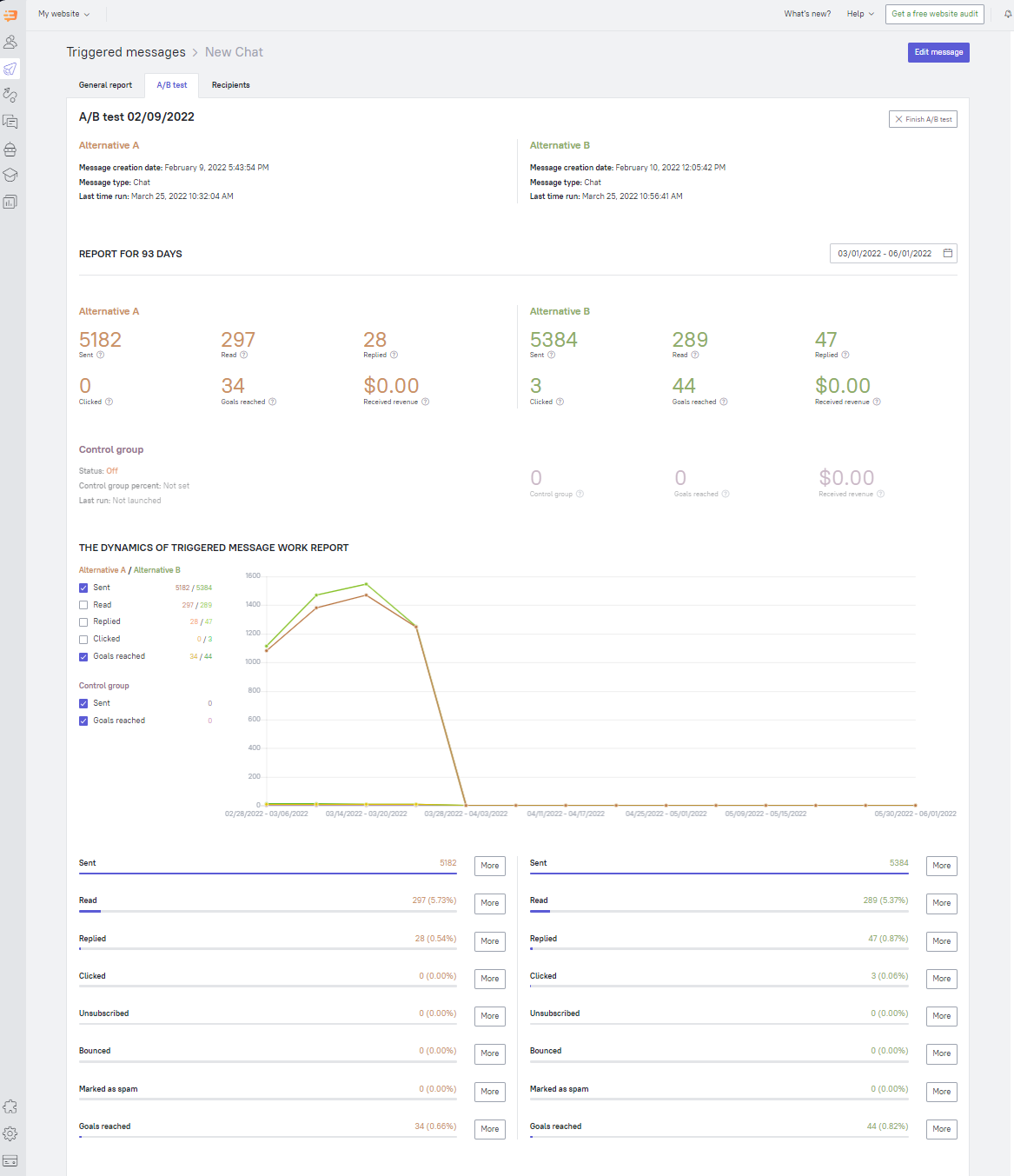
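The selection logic described above can be sketched in a few lines of Python. This is only an illustration under assumptions: the function names `assign_group` and `simulate` are hypothetical, and the sketch does not claim to reproduce how Dashly assigns leads internally. It simply draws the control group first and then splits the remaining leads 50/50 at random.

```python
import random
from collections import Counter


def assign_group(control_percent: float, ab_test: bool, rng: random.Random) -> str:
    """Pick a group for one lead that matched the trigger, timeout and audience.

    Hypothetical helper for illustration only.
    """
    # Step 1: the control group is drawn first; the A/B test does not affect it.
    if rng.random() * 100 < control_percent:
        return "control"
    # Step 2: without an A/B test, every remaining lead gets the single message.
    if not ab_test:
        return "message"
    # Step 3: with an A/B test, the remaining leads are split 50/50 at random.
    return "A" if rng.random() < 0.5 else "B"


def simulate(n_leads: int, control_percent: float = 10, ab_test: bool = True,
             seed: int = 0) -> Counter:
    """Count how many of n_leads end up in each group."""
    rng = random.Random(seed)
    return Counter(assign_group(control_percent, ab_test, rng) for _ in range(n_leads))


if __name__ == "__main__":
    # A small audience can drift noticeably from 50:50; a large one rarely does.
    for n in (20, 20_000):
        print(n, dict(simulate(n)))
```

Running the simulation with a small and a large audience shows why the split only approaches 50:50 as more leads pass through the message.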

💡 Statistics

Numbers mean everything to Dashly. Numbers help you determine which message is better to send. Every message can have its own success indicators. Which message should you choose: the one with more clicks or the one that is opened more often? The decision is up to you. You also decide when the collected data is enough to draw a conclusion and stop the A/B test.

Statistics let you check the following: how many messages were sent, delivered, read, and replied to, in how many messages leads clicked a link, and how many leads marked the message as spam or unsubscribed.

Learn more about how statistics work in this article.

Data is displayed side by side so you can compare the numbers and charts.
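If you want a rough check of whether the difference between two versions is more than random noise, one common approach is a two-proportion z-test on the numbers you read from the statistics. The sketch below is an assumption-based illustration, not something Dashly is documented to calculate for you; the function name `two_proportion_z_test` is hypothetical.

```python
import math


def two_proportion_z_test(success_a: int, total_a: int,
                          success_b: int, total_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two rates.

    "success" can be any counted outcome, e.g. clicks or replies;
    "total" is the number of messages sent for that version.
    """
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both versions need at least one sent message")
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (success_a + success_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_error == 0:
        # Both rates are exactly 0% or 100%: no evidence of a difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Made-up numbers: 40 clicks out of 500 for version A, 55 out of 500 for B.
    z, p = two_proportion_z_test(40, 500, 55, 500)
    print(f"z = {z:.2f}, p-value = {p:.3f}")
```

A small p-value (0.05 is a widely used threshold) suggests the difference is unlikely to be due to chance alone. With small audiences the test will often be inconclusive, which is a hint to keep collecting data.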

Moreover, you can even check how much money each variant (and the control group) brings in. To do this, specify a goal (the next step after Form and Content). The goal is an event that a lead should perform after receiving the triggered message (e.g. buying something). If you set the goal value and income, the statistics show the goal conversion rate and the income for each variant.
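To make the comparison concrete, here is a small Python sketch of how goal conversion and income per lead could be calculated for each group. All numbers are made up, and the `GroupStats` class and `best_by` helper are hypothetical names used only for this example; they are not part of Dashly.

```python
from dataclasses import dataclass


@dataclass
class GroupStats:
    name: str
    leads: int       # leads assigned to this group
    goals: int       # leads who performed the goal event
    income: float    # total income attributed to those goals

    @property
    def conversion(self) -> float:
        """Share of leads in the group who reached the goal."""
        return self.goals / self.leads if self.leads else 0.0

    @property
    def income_per_lead(self) -> float:
        """Average income per lead in the group."""
        return self.income / self.leads if self.leads else 0.0


def best_by(groups, key):
    """Return the group with the highest value of the chosen metric."""
    return max(groups, key=key)


# Made-up numbers for illustration only.
groups = [
    GroupStats("A", leads=450, goals=36, income=1800.0),
    GroupStats("B", leads=450, goals=45, income=1575.0),
    GroupStats("control", leads=100, goals=5, income=250.0),
]

if __name__ == "__main__":
    for g in groups:
        print(f"{g.name:8} conversion={g.conversion:.1%} "
              f"income per lead={g.income_per_lead:.2f}")
```

In this made-up data, version B converts better while version A earns more per lead, so the winner depends on which success indicator matters to you.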

💡 Disabling an A/B test

Once you have collected enough statistics and chosen the best option, you can disable the A/B test.

To disable the A/B test, click the "Finish A/B test" button and select the version you want to disable.

Example: if you are not satisfied with version B, select it and click "Finish A/B test". The A/B test statistics will be archived, and you can check them at any time. Version A will keep working and will be sent to all leads who match the filters of this triggered message.

When you need to compare version A with another version, you can create a new A/B test. One message can have an unlimited number of A/B tests. You can check the history of all closed A/B tests in the archive.

❗ Important: if you disable a triggered message and then launch it again, the A/B test will be resumed.